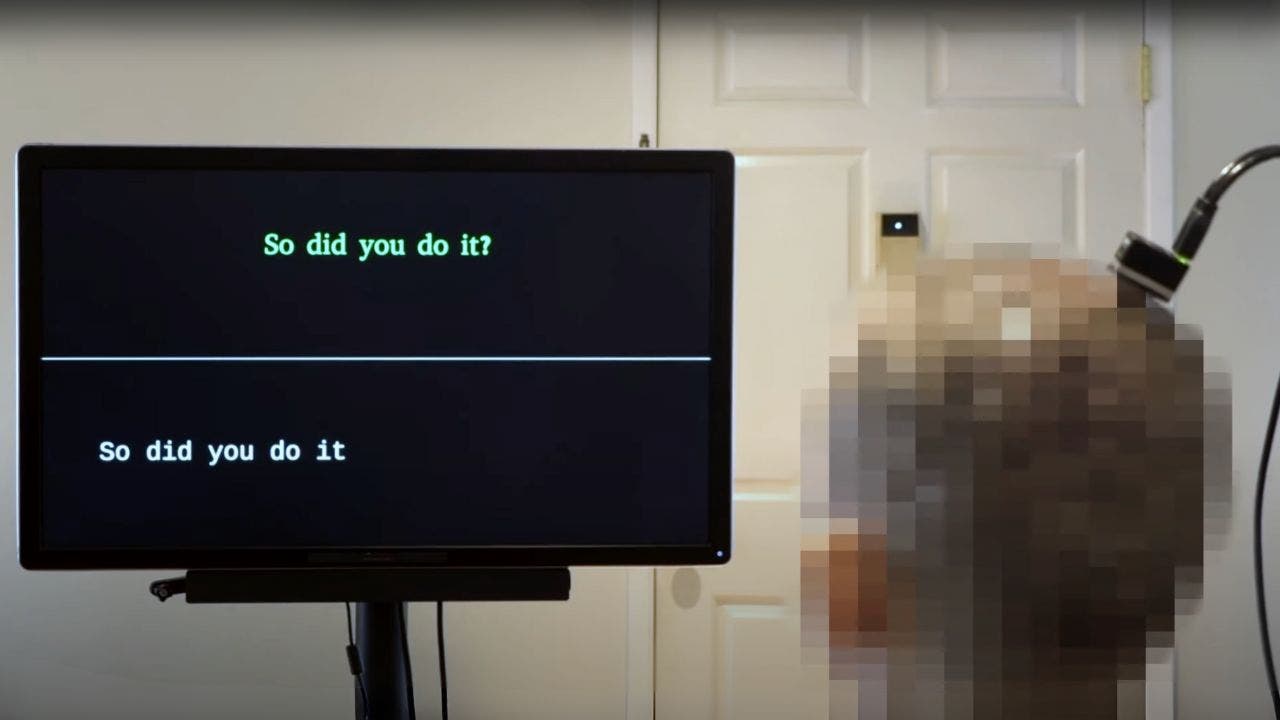

AI system restores speech for paralyzed patients using own voice

Researchers in California have developed an AI-powered system that restores natural-sounding speech to paralyzed individuals in real time, using their own voices. The technology, developed by teams at UC Berkeley and UC San Francisco, combines a brain-computer interface (BCI) with artificial intelligence to decode neural activity into audible speech. It was demonstrated in a clinical trial participant who is severely paralyzed and unable to speak.

The approach works with several kinds of sensing devices: high-density electrode arrays that record neural activity directly from the brain’s surface, microelectrodes that penetrate the brain’s surface, and non-invasive surface electromyography (sEMG) sensors placed on the face to measure muscle activity. Whichever device is used, the AI learns to transform the recorded signals into the sounds of the patient’s voice.

The neuroprosthesis samples neural data from the brain’s motor cortex, the area controlling speech production, and the AI decodes that data into speech. This breakthrough allows paralyzed individuals to communicate their needs, express complex thoughts, and connect with loved ones more naturally.

A key advance is real-time speech synthesis. The AI model streams intelligible speech from brain signals in near-real time, reducing the latency of speech neuroprostheses. This streaming approach enables rapid speech decoding, comparable to that of voice assistants such as Alexa and Siri, and improves both speed and accuracy.
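To make the idea of streaming concrete, here is a minimal Python sketch of chunk-by-chunk decoding. It is an illustration only, not the researchers' implementation: the frame format, the chunk size, and the names `stream_decode` and `toy_decoder` are all assumptions made for this example, and the "decoder" is a stand-in for a trained model.

```python
from typing import Callable, Iterable, Iterator, List

# Hypothetical format: one time step of recorded signal features.
Frame = List[float]


def stream_decode(
    frames: Iterable[Frame],
    decode_chunk: Callable[[List[Frame]], str],
    chunk_size: int = 4,
) -> Iterator[str]:
    """Yield decoded output every `chunk_size` frames.

    Output becomes available as soon as the first chunk is full,
    instead of only after the whole utterance has been recorded.
    """
    buffer: List[Frame] = []
    for frame in frames:
        buffer.append(frame)
        if len(buffer) == chunk_size:
            yield decode_chunk(buffer)
            buffer = []
    if buffer:  # flush a trailing partial chunk
        yield decode_chunk(buffer)


def toy_decoder(chunk: List[Frame]) -> str:
    """Placeholder for a trained model: summarizes the chunk as a string."""
    total = sum(sum(frame) for frame in chunk)
    count = sum(len(frame) for frame in chunk)
    return f"audio[{len(chunk)} frames, mean={total / count:.2f}]"


if __name__ == "__main__":
    # Ten made-up frames with two features each.
    demo_frames = [[float(i)] * 2 for i in range(10)]
    for piece in stream_decode(demo_frames, toy_decoder):
        print(piece)
```

Because `stream_decode` is a generator, the first piece of output is produced after only `chunk_size` frames have arrived. Waiting time then depends on the chunk length, not on the length of the sentence.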

The technology aims to restore naturalistic speech, allowing more fluent and expressive communication. The AI is trained on recordings of the patient’s voice from before their injury, so the generated audio sounds like them. For patients with no residual vocalization, a pre-trained text-to-speech model and the patient’s pre-injury voice are used to fill in the missing details.

For people with paralysis and conditions like ALS, the technology could be significant: it has the potential to improve their quality of life by giving them a voice and enabling effective communication. The researchers’ next steps include speeding up the AI’s processing and making the output voice more expressive by incorporating variations in tone, pitch, and loudness into the synthesized speech.

Overall, this AI-powered system represents a major advance in brain-computer interfaces and artificial intelligence. It offers people with paralysis new hope of communicating and expressing themselves more naturally, and the outlook is promising for its continued development and integration into real-world applications.